Who this is for: Product, operations, and engineering teams choosing which Order Type fits a use case, and developers calling Create Order. Each section below explains the workflow, deliverables, and configuration knobs for one Order Type. See Order Types reference for the canonical orderType enum values.

Overview

Order Types define:

- what localization workflow is being executed,

- which AI orchestration pipeline is triggered,

- what source assets are utilized,

- what deliverables are generated,

- and how reviewers, linguists, or studios interact with the output.

- AI orchestration,

- provider routing,

- workflow automation,

- assignment logic,

- deliverable generation,

- and optional human review operations.

- consume different AI providers,

- expose different editing interfaces,

- and generate different exportable outputs.

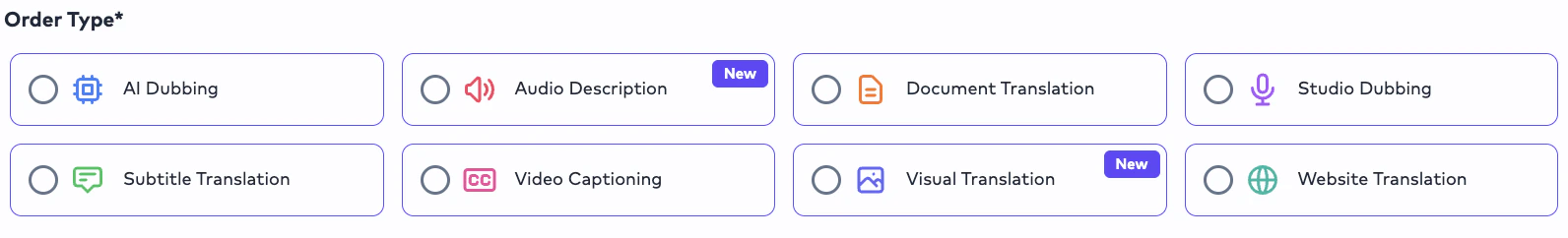

Available Order Types

The Ollang Project Management Dashboard currently supports:

AI Dubbing

Generate multilingual dubbed audio and localized video deliverables using AI voice synthesis workflows.

Subtitle Translation

Translate subtitle assets and timed dialogue into one or multiple target languages.

Video Captioning (CC)

Generate transcription and caption files from source media.

Document Translation

Localize multilingual documents into one or multiple target languages.

Studio Dubbing

Coordinate external dubbing studios and professional voice production workflows.

Visual Translation

Localize on-screen text inside videos, graphics, presentations, and visual assets while preserving the original layout, positioning, and multilingual consistency.

Website Translation

Localize website content, UI strings, and structured localization files across multiple languages.
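For developers calling Create Order, the list above can be sketched as an enumeration. This is a minimal illustration, not the Ollang API: the enum values below simply reuse the display names, and `build_order_request`, `sourceLanguage`, and `targetLanguages` are hypothetical names. The canonical orderType values are in the Order Types reference.

```python
from enum import Enum


class OrderType(Enum):
    # Display names come from the list above. The string values are
    # placeholders, NOT the canonical orderType enum values.
    AI_DUBBING = "AI Dubbing"
    SUBTITLE_TRANSLATION = "Subtitle Translation"
    VIDEO_CAPTIONING = "Video Captioning (CC)"
    DOCUMENT_TRANSLATION = "Document Translation"
    STUDIO_DUBBING = "Studio Dubbing"
    VISUAL_TRANSLATION = "Visual Translation"
    WEBSITE_TRANSLATION = "Website Translation"


def build_order_request(order_type, source_language, target_languages):
    """Assemble a hypothetical Create Order payload as a plain dict."""
    if not target_languages:
        raise ValueError("at least one target language is required")
    return {
        "orderType": order_type.value,
        # Field names below are assumptions made for this sketch.
        "sourceLanguage": source_language,
        "targetLanguages": list(target_languages),
    }
```

Keeping the Order Type in an enum means a typo fails at import time rather than when the request is sent.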

AI Dubbing

Overview

AI Dubbing is Ollang’s multilingual AI voice localization workflow. The workflow automatically orchestrates:

- speech-to-text,

- subtitle generation,

- translation,

- voice synthesis,

- audio processing,

- and final deliverable generation.

- marketing videos,

- educational content,

- training materials,

- YouTube content,

- podcasts,

- enterprise localization,

- media distribution,

- and accessibility operations.

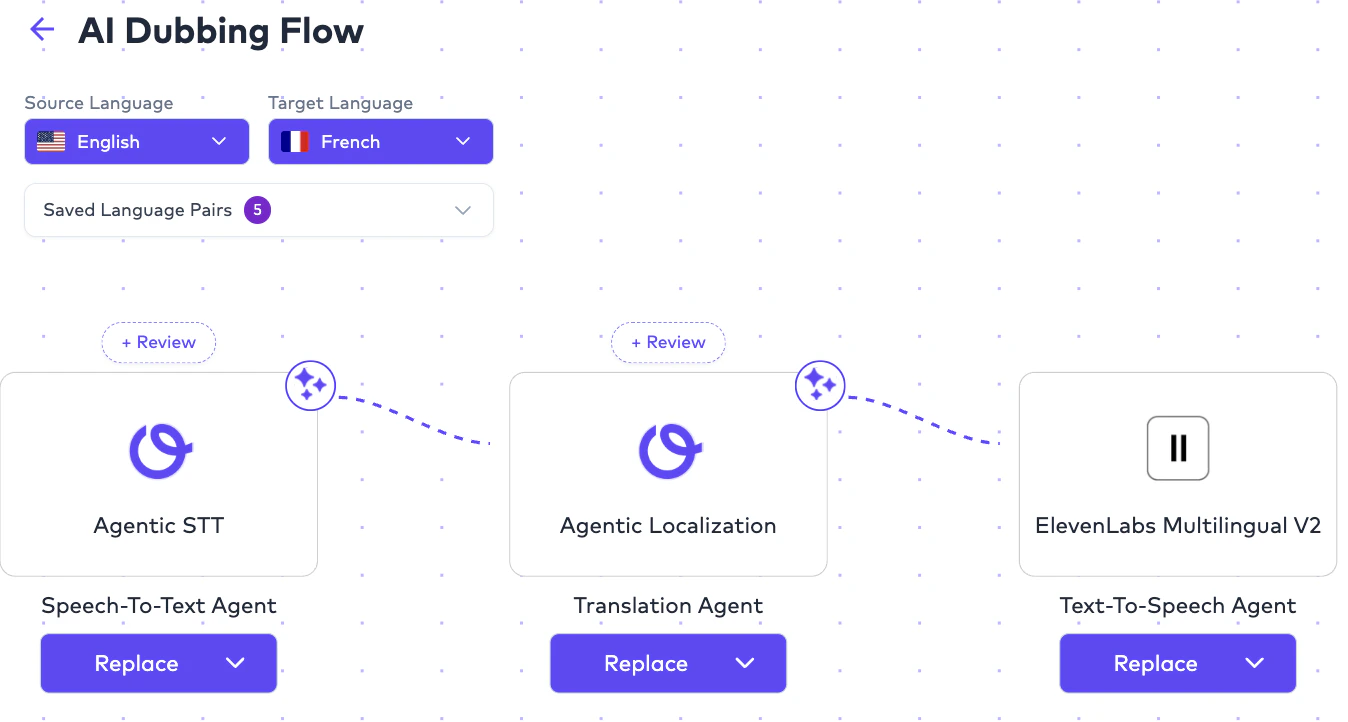

Core AI Dubbing Pipeline

A typical AI Dubbing workflow internally follows:

- different AI providers,

- different workflow configurations,

- and different orchestration logic.

AI Dubbing Styles

AI Dubbing offers multiple dubbing styles. Users select the dubbing style during Order configuration. Supported dubbing styles currently include:

Vocal Replacement

Replace original vocals with localized dubbed vocals.

Overdub

Preserve original audio underneath localized dubbed speech.

Audio Description

Generate accessibility narration describing visual actions and environments.

Vocal Replacement

Operational Behavior

Vocal Replacement replaces the original source vocals with localized, AI-generated dubbed vocals.

- pacing,

- speech timing,

- speaker continuity,

- and natural dialogue flow.

- multilingual training content,

- enterprise localization,

- dubbed media,

- and multilingual distribution.

Example Workflow

Overdub

Operational Behavior

Overdub workflows preserve the original source audio and layer localized dubbed vocals on top.

- documentaries,

- interviews,

- educational content,

- and narration-heavy workflows

Example

Overdub Example

Audio Description

Overview

Audio Description (AD) workflows generate narration describing:

- visual actions,

- environments,

- scene transitions,

- gestures,

- and contextual visual information.

- accessibility localization,

- visually impaired audiences,

- compliance requirements,

- and inclusive media distribution.

Rules

For Audio Description workflows, the source language and target language are generally the same.
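The rule above can be checked before an order is submitted. The sketch below is illustrative only: `check_audio_description_languages` is a hypothetical helper, and because the rule says "generally", it returns a warning rather than rejecting the order.

```python
def check_audio_description_languages(source_language, target_language):
    """Return a warning string if the languages differ, otherwise None."""
    # Compare case-insensitively so "EN" and "en" count as the same language.
    if source_language.strip().lower() != target_language.strip().lower():
        return (
            "Audio Description orders generally use the same source and "
            f"target language, got {source_language!r} and {target_language!r}"
        )
    return None
```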

Long-Form Audio Description Support

The platform supports Audio Description workflows for:

- short-form content,

- episodic media,

- enterprise training material,

- educational libraries,

- and long-form video content.

- 30-minute videos,

- 1-hour episodes,

- 2–3 hour productions,

- and enterprise accessibility libraries.

AI Dubbing Deliverables

AI Dubbing workflows may generate:

Mixed Master Video

AI Dubbing Audio

AI Dubbing Audio (Vocals Only)

Created M&E

Created Source Vocals Only Audio

Audio Description Deliverables

When the Audio Description dubbing style is selected, additional outputs may include:

- AD Mixed Audio 5.1

- AD Narrator Audio 2.0

- AD Narrator Audio 5.1

- AD Mixed Audio 2.0

- AD Mixed Video 2.0

- Created M&E

- Created Source Vocals Only Audio
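As a rough sketch of how the two deliverable lists combine, the helper below returns the outputs an order may produce for a given dubbing style. The deliverable names are copied from the lists above. The function name and the use of plain strings as identifiers are assumptions for illustration, and which outputs are actually generated depends on the order configuration.

```python
AI_DUBBING_DELIVERABLES = [
    "Mixed Master Video",
    "AI Dubbing Audio",
    "AI Dubbing Audio (Vocals Only)",
    "Created M&E",
    "Created Source Vocals Only Audio",
]

AUDIO_DESCRIPTION_DELIVERABLES = [
    "AD Mixed Audio 5.1",
    "AD Narrator Audio 2.0",
    "AD Narrator Audio 5.1",
    "AD Mixed Audio 2.0",
    "AD Mixed Video 2.0",
    "Created M&E",
    "Created Source Vocals Only Audio",
]


def possible_deliverables(dubbing_style):
    """List deliverables that may be generated for a dubbing style."""
    names = list(AI_DUBBING_DELIVERABLES)
    if dubbing_style == "Audio Description":
        # Add the AD-specific outputs, skipping names already listed.
        for name in AUDIO_DESCRIPTION_DELIVERABLES:
            if name not in names:
                names.append(name)
    return names
```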

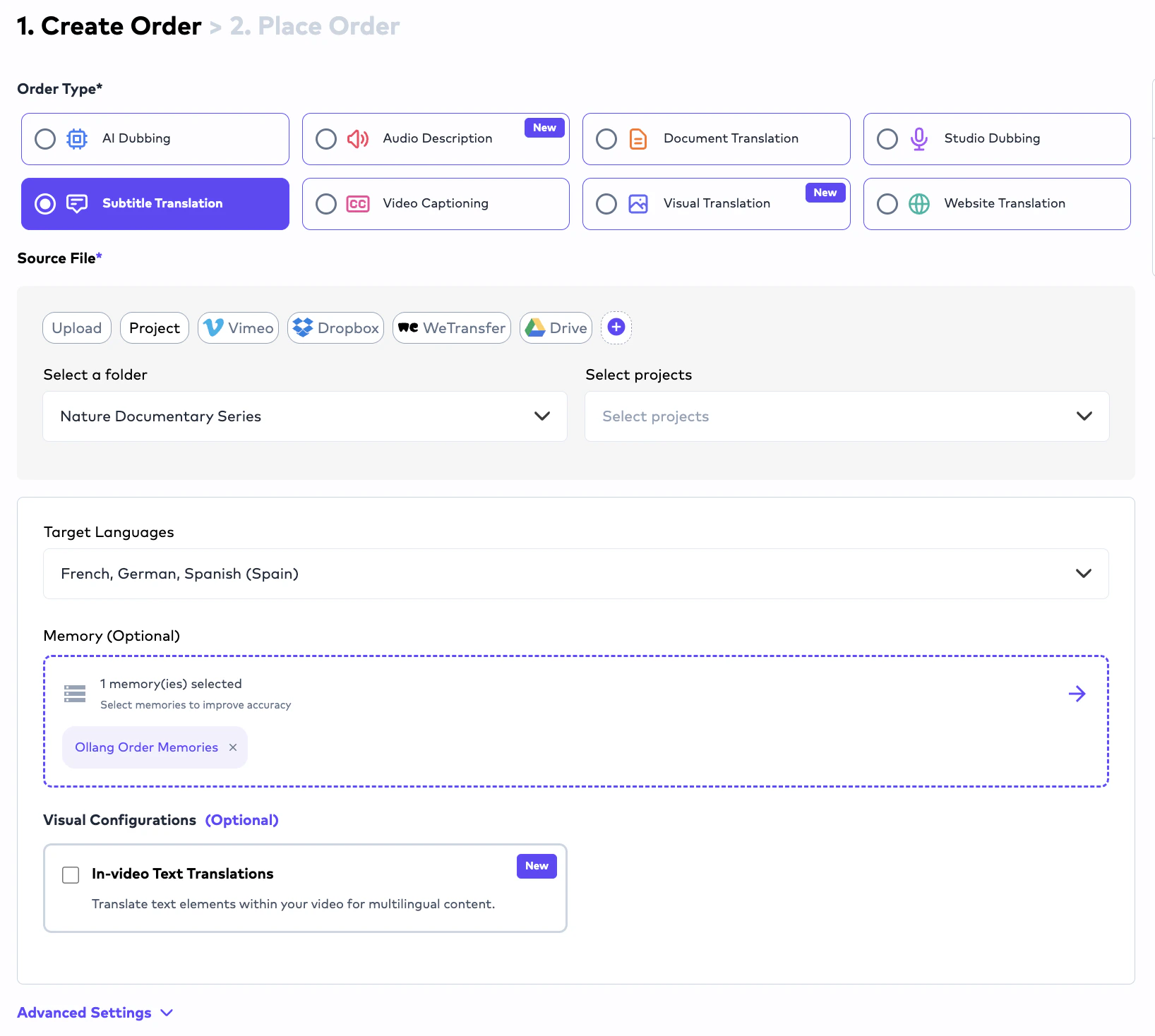

Subtitle Translation

Overview

Subtitle Translation workflows localize subtitle content from:

- source videos,

- source subtitle files,

- or transcription outputs

Typical Subtitle Translation Workflow

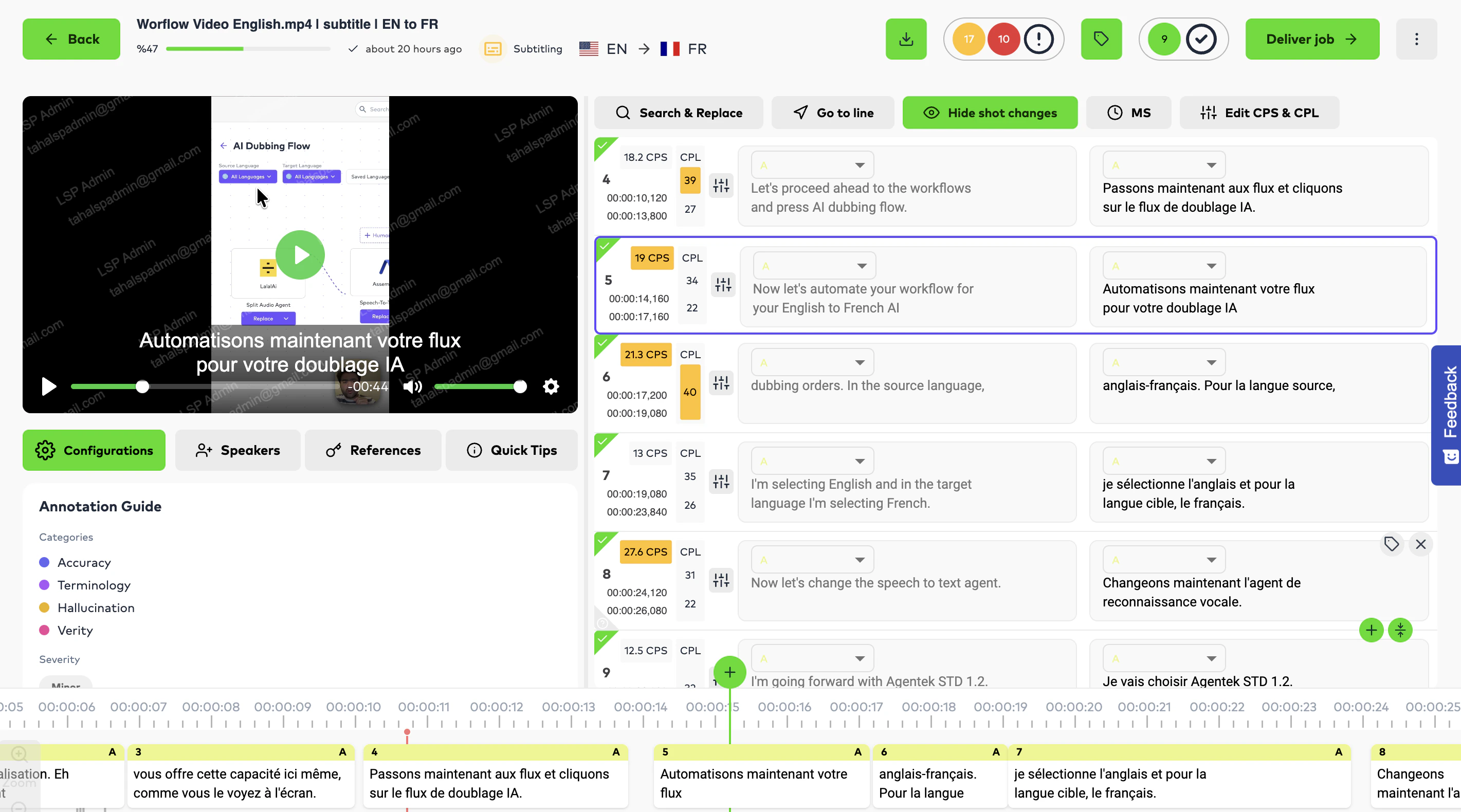

Subtitle Editing Capabilities

Inside the Editor Interface, linguists can:

- edit subtitle text,

- modify timing,

- split subtitle segments,

- merge subtitle segments,

- optimize CPS/CPL compliance,

- reassign speakers,

- and refine readability or subtitle timing consistency.
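CPS (characters per second) and CPL (characters per line) are the two readability metrics named in the list above. The sketch below shows one common way to compute them for a single subtitle cue. The limits of 17 CPS and 42 CPL are illustrative defaults, not Ollang settings, and counting conventions (for example, whether spaces count) vary between style guides.

```python
def subtitle_metrics(text, start_seconds, end_seconds):
    """Return (characters per second, longest line length) for one cue."""
    duration = end_seconds - start_seconds
    if duration <= 0:
        raise ValueError("cue must end after it starts")
    lines = text.splitlines() or [""]
    # Line breaks are not counted; spaces and punctuation are.
    total_chars = sum(len(line) for line in lines)
    return total_chars / duration, max(len(line) for line in lines)


def compliance_issues(text, start_seconds, end_seconds, max_cps=17.0, max_cpl=42):
    """Name the metrics that exceed the given (assumed) limits."""
    cps, cpl = subtitle_metrics(text, start_seconds, end_seconds)
    issues = []
    if cps > max_cps:
        issues.append("CPS")
    if cpl > max_cpl:
        issues.append("CPL")
    return issues
```

A cue flagged for CPS is typically fixed by extending its duration or shortening the text; a cue flagged for CPL is typically fixed by rebalancing the line break.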

Subtitle Export Formats

Completed Subtitle Translation workflows support exports including:

SRT

VTT

STL

ITT - iTunes

SCC

DFXP

ASS

STYLIZED ASS

DOCX

XLSX

DUBBING SCRIPT

DUBBING SRT

FN Excluded Variants

The platform additionally supports:

- SCC-FN Excluded,

- SRT-FN Excluded,

- DFXP-FN Excluded,

- and ASS-FN Excluded export variants.

- broadcast compatibility,

- formatting workflows,

- and enterprise subtitle distribution requirements.
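The export formats and their FN Excluded variants can be captured in a small lookup. This is a sketch only: the format labels are copied from the lists above, while `fn_excluded_variant` is a hypothetical helper and the labels are not confirmed API identifiers.

```python
EXPORT_FORMATS = [
    "SRT", "VTT", "STL", "ITT - iTunes", "SCC", "DFXP",
    "ASS", "STYLIZED ASS", "DOCX", "XLSX", "DUBBING SCRIPT", "DUBBING SRT",
]

# Formats listed above as having an "FN Excluded" export variant.
FN_EXCLUDED_BASES = {"SCC", "SRT", "DFXP", "ASS"}


def fn_excluded_variant(export_format):
    """Return the FN Excluded label for a format, or None if none is listed."""
    key = export_format.strip().upper()
    if key in FN_EXCLUDED_BASES:
        return f"{key}-FN Excluded"
    return None
```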

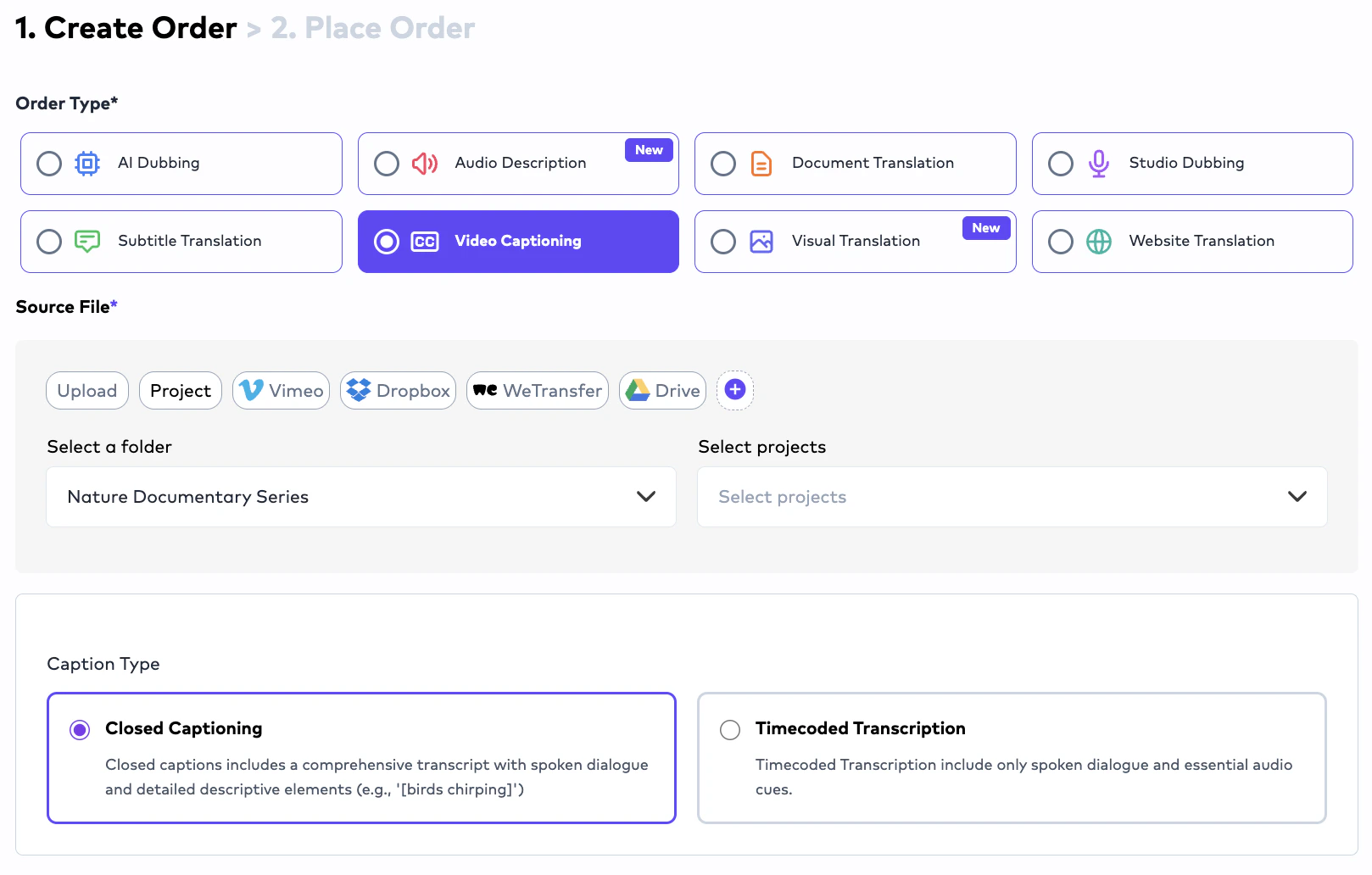

Video Captioning (CC)

Overview

Video Captioning (CC) workflows generate:

- timed transcription files,

- subtitle-ready caption structures,

- and foundational subtitle assets.

- Subtitle Translation,

- AI Dubbing,

- or accessibility localization workflows.

Speaker Diarization

The platform supports:

- speaker separation,

- speaker labeling,

- and timed segmentation.

- Speaker A,

- Speaker B,

- Speaker C.

- additional gender assignment logic may be utilized to improve voice synthesis quality.

Overlapping Speech Handling

When multiple speakers speak closely together, the system attempts to separate speakers into distinct timed segments.

- combining multiple speakers into the same subtitle line whenever operationally possible.

- split segments,

- reassign speakers,

- and manually refine subtitle structures.
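To illustrate what overlapping speech looks like in diarized output, the sketch below flags pairs of segments from different speakers whose time ranges intersect, for example so they can be queued for manual review. It does not describe how Ollang separates speakers internally; the `Segment` structure and `find_overlaps` are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str
    start: float  # seconds
    end: float    # seconds


def find_overlaps(segments):
    """Return pairs of segments from different speakers that overlap in time."""
    ordered = sorted(segments, key=lambda s: s.start)
    overlaps = []
    for i, first in enumerate(ordered):
        for second in ordered[i + 1:]:
            # Segments are sorted by start time, so once one starts at or
            # after `first` ends, no later segment can overlap `first`.
            if second.start >= first.end:
                break
            if second.speaker != first.speaker:
                overlaps.append((first, second))
    return overlaps
```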

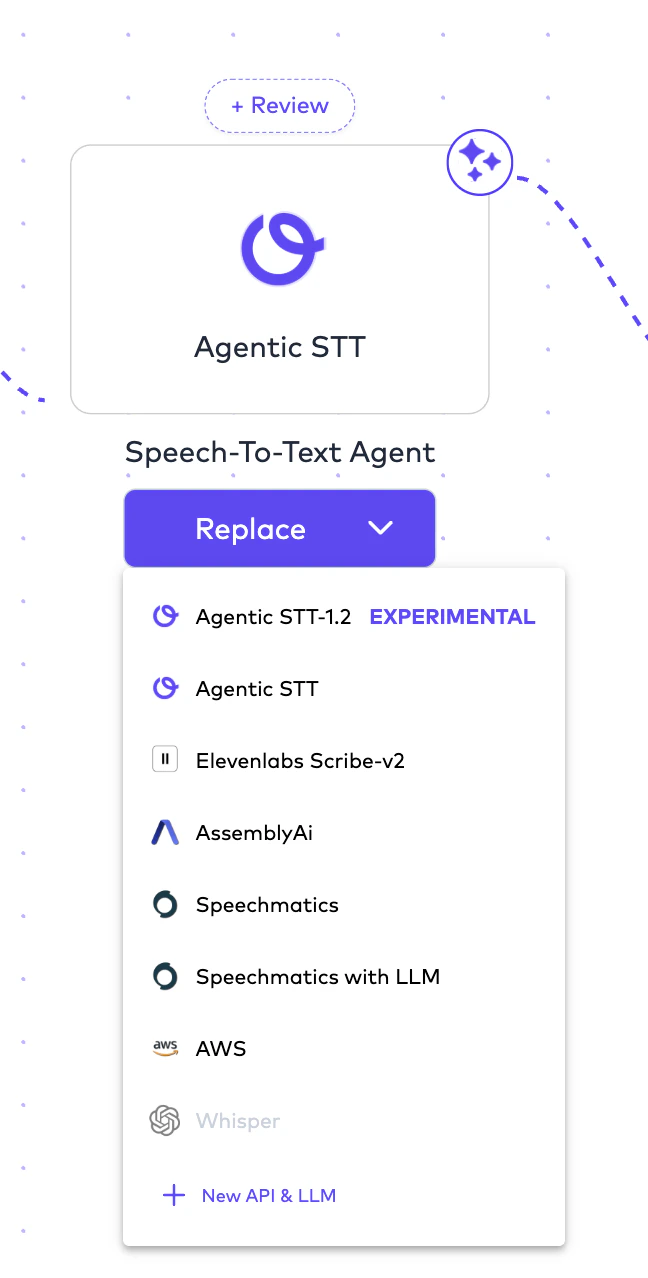

Multi-Provider STT Orchestration

Unlike platforms restricted to a single transcription provider, Ollang supports:

- multiple speech-to-text workflows,

- and configurable provider orchestration.

- Ollang Agentic workflows,

- AssemblyAI,

- Speechmatics,

- AWS,

- and additional STT providers.

- transcription quality,

- diarization behavior,

- and language-specific performance.

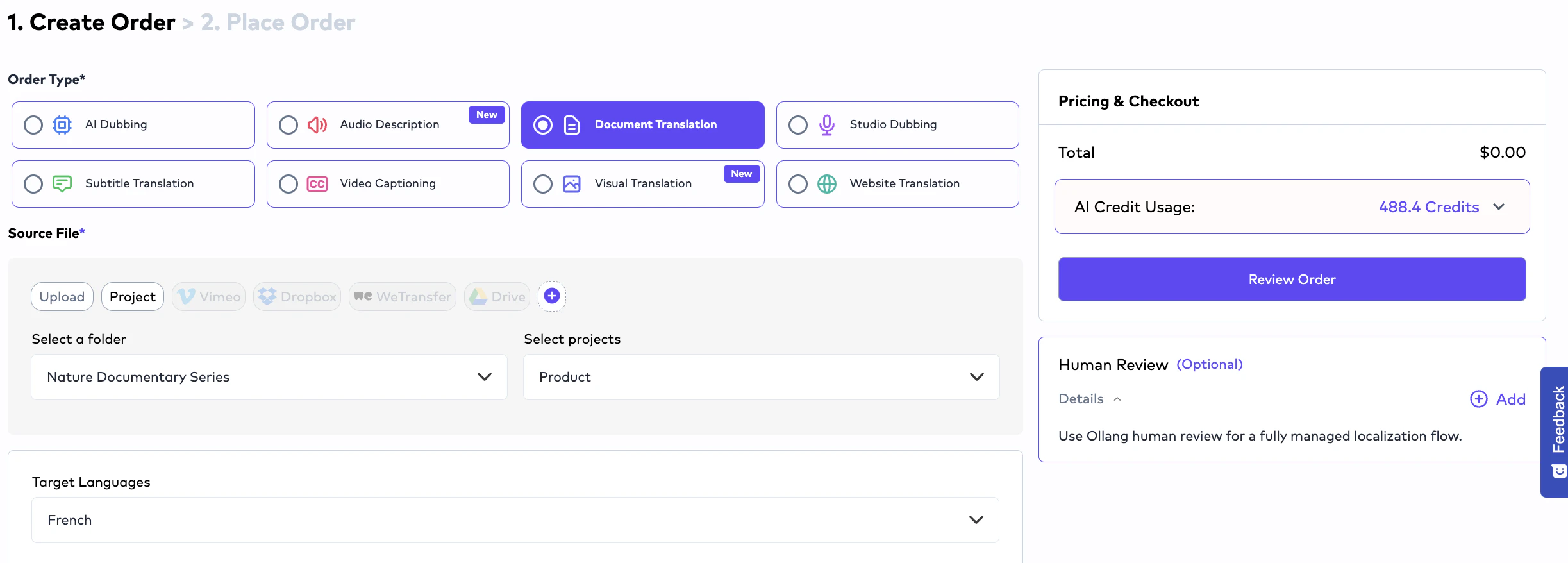

Document Translation

Overview

Document Translation workflows localize:

- office documents,

- structured localization resources,

- multilingual documentation,

- and software localization files.

- simple office localization,

- and advanced structured localization pipelines.

Supported Document Formats

The platform supports:

.docx

.pptx

.xlsx

.html

.xliff

.sdlxliff

.json

.po

.txt

.srt

.vtt

.pages

.key

.numbers
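A client can check a file against the list above before uploading it. The sketch below is a minimal example: the extension set is copied from this section, and `is_supported_document` is a hypothetical helper, not part of any Ollang SDK.

```python
from pathlib import Path

SUPPORTED_DOCUMENT_EXTENSIONS = {
    ".docx", ".pptx", ".xlsx", ".html", ".xliff", ".sdlxliff", ".json",
    ".po", ".txt", ".srt", ".vtt", ".pages", ".key", ".numbers",
}


def is_supported_document(filename):
    """Check the file extension, ignoring case, against the supported set."""
    return Path(filename).suffix.lower() in SUPPORTED_DOCUMENT_EXTENSIONS
```

The check looks only at the extension, so it catches obvious mistakes early but does not verify file contents.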

Structured Localization Support

The platform supports structured localization formats including:

- XLIFF,

- SDLXLIFF,

- JSON,

- PO,

- subtitle assets,

- and HTML localization workflows.

- engineering teams,

- localization operations,

- and product organizations

Studio Dubbing

Overview

Studio Dubbing workflows are designed for:

- professional dubbing studios,

- external localization vendors,

- enterprise voice teams,

- and production-based dubbing operations.

- voice recording occurs externally.

- the operational coordination layer.

Typical Studio Dubbing Pipeline

- dubbing vocals,

- final mixed outputs,

- Mixmaster files,

- and studio-generated deliverables.

- processes audio assets,

- coordinates operational workflows,

- and manages final delivery generation.

Important Studio Dubbing Rule

Delivery workflows require either final mixed outputs or sufficient audio structure for operational Mixmaster generation.

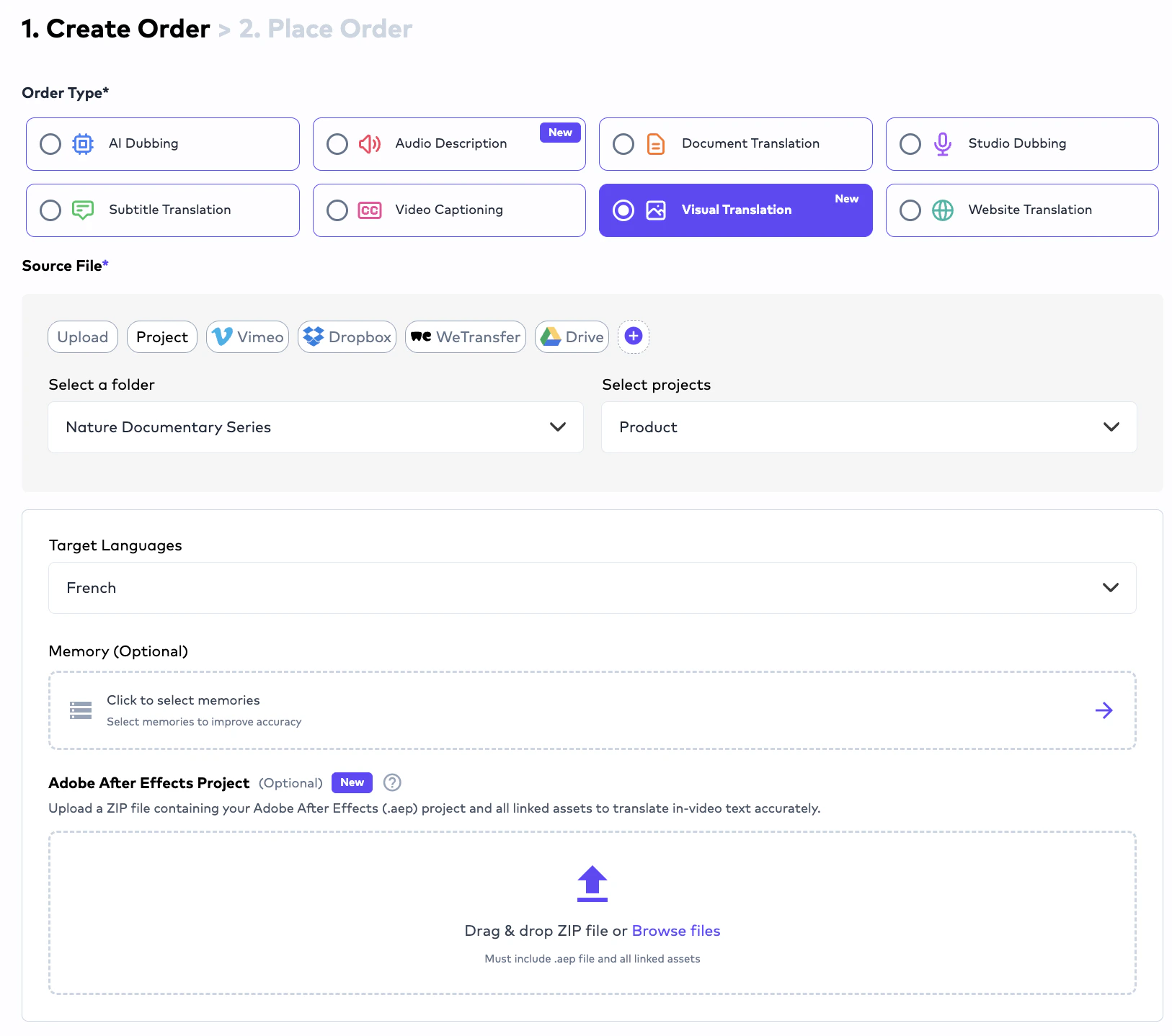

Visual Translation

Visual Translation workflows localize text that appears visually inside media assets. Unlike traditional subtitle or dubbing workflows, Visual Translation focuses on:

- on-screen text,

- embedded graphics,

- banners,

- UI text,

- product screenshots,

- visual overlays,

- and image-based language elements.

- marketing campaigns,

- product videos,

- social media content,

- presentations,

- e-learning material,

- mobile app showcases,

- and multilingual visual content operations.

Supported Visual Translation Inputs

Visual Translation workflows support:

Video-Based Visual Translation

Upload videos containing embedded visual text that requires multilingual localization.

Adobe After Effects Files

Upload Adobe After Effects project files for structured visual localization workflows.

Image-to-Image Translation

Translate visual text appearing directly inside images while preserving design structure.

Graphic Localization

Localize banners, graphics, presentations, and visual marketing materials.

How Visual Translation Works

Visual Translation workflows typically operate as:

- detect visual text,

- preserve positioning,

- maintain formatting consistency,

- and localize embedded visual content.

Image-to-Image Translation

Visual Translation also supports image-based text localization.

- words embedded directly inside images,

- banners,

- screenshots,

- product visuals,

- presentations,

- or marketing assets

- layout,

- design consistency,

- positioning,

- and visual readability.

Adobe After Effects Support

Organizations may upload:

- Adobe After Effects project files

- animated marketing videos,

- multilingual motion graphics,

- product launch campaigns,

- and enterprise creative localization.

Typical Visual Translation Use Cases

Common use cases include:

- multilingual marketing videos,

- localized advertisements,

- product demonstrations,

- visual UI localization,

- social media campaigns,

- e-learning visuals,

- and multilingual creative operations.

Website Translation

Overview

Website Translation workflows enable organizations to localize websites into multiple languages using Ollang’s orchestration and MCP-based architecture. Rather than manually exporting website files, organizations can connect website workflows directly through Ollang MCP integrations. This enables scalable multilingual website localization.

How Website Translation Works

Website Translation workflows typically operate as:

- localize websites into multiple languages,

- preserve multilingual consistency,

- reuse terminology memory,

- and operationalize global website localization.

Website Localization Capabilities

Website Translation workflows can support localization of:

- website copy

- landing pages

- product descriptions

- metadata

- UI text

- multilingual navigation

- structured localization resources

- multilingual product experiences